The Parallel Structured Light approach for 3D sensing aims to fill a critical gap among 3D sensing solutions, enabling a whole new range of machine vision applications and tasks in the process.

Vision-guided robots help manufacturers, logistics companies, and businesses in other sectors improve their order fulfillment processes and achieve better productivity and higher profits. What an automation system can do depends largely on the type of machine vision it is equipped with.

3D Machine Vision Systems

2D machine vision systems provide two-dimensional images without depth information. They are therefore suitable only for simple applications such as barcode reading, character recognition, dimension checking, or label verification.

Conversely, 3D machine vision systems enable much more complex robotic tasks. Because they provide three-dimensional point clouds with precise X, Y, and Z coordinates, 3D vision technologies enable robotic systems to recognize objects more accurately, pick them up, and place them at another location, such as a conveyor belt, for further processing. Advanced 3D machine vision systems are also well suited for quality control and inspection, detection of surface defects, and other tasks that require depth information.

Many 3D Choices

The market offers a rich selection of 3D vision systems. A number of technologies dominate the 3D vision market, all falling into one of two major categories: time-of-flight (ToF) methods, which power ToF area-scan and LiDAR devices, and triangulation-based methods, which comprise laser triangulation or profilometry, photogrammetry, stereo vision, and structured light systems.
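The two categories rest on different range equations, which a short sketch can make concrete. The Python below is only an illustration under textbook assumptions (a single light pulse for ToF; a rectified, pinhole-model sensor pair for triangulation). The function and parameter names are hypothetical and do not come from any vendor's software.

```python
C = 299_792_458.0  # speed of light in m/s


def tof_distance(round_trip_s: float) -> float:
    """Time-of-flight: light travels to the object and back, so d = c * t / 2."""
    return C * round_trip_s / 2.0


def triangulation_depth(focal_px: float, baseline_m: float, disparity_px: float) -> float:
    """Triangulation for a rectified pair: Z = f * B / d.

    focal_px     focal length in pixels (assumed known from calibration)
    baseline_m   distance between the two viewpoints in meters
    disparity_px shift of the same point between the two views in pixels
    """
    if disparity_px <= 0:
        raise ValueError("disparity must be positive")
    return focal_px * baseline_m / disparity_px


# Hypothetical values chosen only to exercise the formulas.
print(tof_distance(10e-9))                    # a 10 ns round trip
print(triangulation_depth(1000.0, 0.1, 50.0)) # 1000 px focal length, 10 cm baseline
```

In the ToF case, range precision depends on how finely time can be measured. In the triangulation case, it depends on how finely the disparity can be located in the image.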

Moving Objects

Each of these 3D sensing methods is based on a different principle, with its own advantages and drawbacks, which makes each one suitable for a different type of application. And while some technologies have improved greatly in recent years and pushed 3D sensing to a higher level, one limitation remains that none of them has been able to overcome: they cannot capture the entire surface of a moving object and reconstruct it in 3D at high quality, without motion artifacts.

The trade-off between quality and speed limits the range of applications that can be automated and forces customers into an unsatisfactory compromise. For instance, ToF vision systems are very fast, but the resolution of the output 3D data is rather poor. Structured light systems, on the other hand, provide high resolution and accuracy, but at the cost of lower speed, because the scene needs to be static during the acquisition process.
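To see why a multi-frame method needs a static scene, it helps to estimate how far an object travels while the frames are being collected. The sketch below is a back-of-the-envelope calculation. The function name, the conveyor speed, the pattern count, and the frame rate are all hypothetical values chosen for illustration, and the model ignores motion within a single exposure.

```python
def travel_during_acquisition(speed_m_s: float, n_frames: int, frame_rate_hz: float) -> float:
    """Distance in meters an object moves between the first and last frame."""
    if n_frames < 1 or frame_rate_hz <= 0:
        raise ValueError("need at least one frame and a positive frame rate")
    return speed_m_s * (n_frames - 1) / frame_rate_hz


# Hypothetical: conveyor at 0.5 m/s, 10 coded patterns captured at 100 frames per second.
multi_frame = travel_during_acquisition(0.5, 10, 100.0)
single_shot = travel_during_acquisition(0.5, 1, 100.0)
print(f"multi-frame: {multi_frame * 1000:.0f} mm, single shot: {single_shot * 1000:.0f} mm")
```

With these assumed numbers the object shifts by several centimeters between the first and last pattern, so the patterns no longer describe the same surface points. A single-shot capture has no inter-frame shift at all.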

It once appeared that this limitation of area-scan 3D cameras could not be overcome. That changed recently, when a new approach to 3D sensing was introduced.

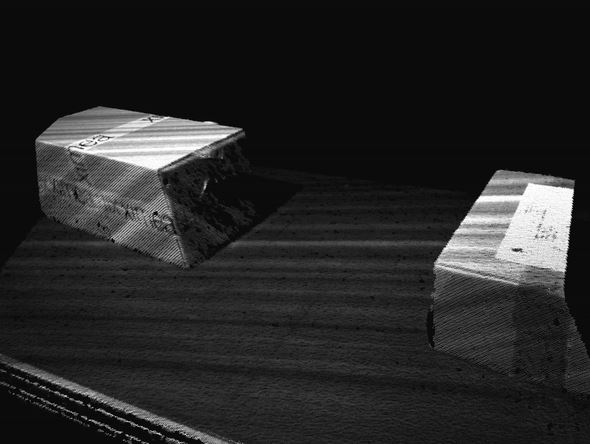

Filling the Gap in 3D Sensing

The new technology is called “Parallel Structured Light” and was introduced by Photoneo. The method uses structured light, but in a fundamentally different way from conventional structured light systems.

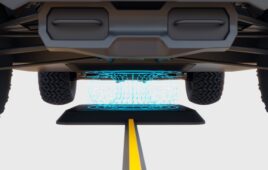

Conventional structured light vision systems project one or more coded light patterns onto a scene over multiple frames, so the scene must be static during acquisition. “Parallel Structured Light” technology, by contrast, effectively “freezes” the scene and acquires multiple virtual images of it from a single shot of the sensor.

Parallel Structured Light

The novel method is implemented with a special CMOS image sensor that has a mosaic pixel pattern. In contrast to the conventional structured light method, “Parallel Structured Light” systems keep the projector’s laser on the entire time. What is turned on and off during the exposure window are the individual pixels of the sensor.

The pixel modulation thus happens directly in the sensor and not in the projection field, as is the case with the structured light method. This means that the individually coded light patterns are sampled in the sensor at one point in time and in one frame, allowing the capture of objects that are in motion.
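The difference between sampling coded patterns over several frames and sampling them all at one instant can be illustrated with a toy simulation. The Python sketch below is not Photoneo's implementation. It assumes a 1D scene, a Gray-code pattern set, and an object that moves two pixels per frame. It ignores the spatial interleaving of the mosaic sensor and the laser sweep. All names and constants are hypothetical.

```python
WIDTH = 32  # camera pixels in this 1D toy scene
BITS = 6    # number of coded patterns (Gray-code bit planes)


def gray_encode(n: int) -> int:
    return n ^ (n >> 1)


def gray_decode(g: int) -> int:
    n = 0
    while g:
        n ^= g
        g >>= 1
    return n


def scene(t: int) -> list[int]:
    """Projector column seen by each camera pixel at time step t.

    The background maps pixel x to column x. An 8-pixel-wide object, moving
    2 pixels per time step, maps to column x + 16 (an assumed depth offset).
    """
    start = 4 + 2 * t
    return [x + 16 if start <= x < start + 8 else x for x in range(WIDTH)]


def capture(sample_times) -> list[int]:
    """Sample bit plane k at time sample_times[k], then decode per pixel."""
    codes = [0] * WIDTH
    for k, t in enumerate(sample_times):
        for x, column in enumerate(scene(t)):
            codes[x] |= ((gray_encode(column) >> k) & 1) << k
    return [gray_decode(c) for c in codes]


sequential = capture(range(BITS))  # one pattern per frame; the scene keeps moving
parallel = capture([0] * BITS)     # all patterns sampled at the same instant

truth = scene(0)
print("sequential errors:", sum(a != b for a, b in zip(sequential, truth)))
print("parallel errors:  ", sum(a != b for a, b in zip(parallel, truth)))
```

In this model the sequential capture mixes bits from different object positions, so pixels crossed by the moving edges decode to wrong columns. The parallel capture reproduces the scene as it was at the sampling instant.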

This new approach enables a whole new range of machine vision applications and tasks that can be automated. Robotic object handling, bin picking, sorting, machine tending, palletization and depalletization tasks, or quality control, inspection, and metrology are no longer limited to static scenes. “Parallel Structured Light” systems are able to capture, recognize, and localize moving objects – such as boxes, food items, or shapeless objects coming on a conveyor belt – and enable precise robot navigation so that the robot can pick them or perform other object-handling tasks.

In the case of Photoneo, Parallel Structured Light technology is implemented in a 3D camera named MotionCam-3D. This system can capture objects moving at up to 140 km/h, delivering a point cloud resolution of 0.9 Mpx (and 2 Mpx in static mode).

The automation of tasks that require the recognition of moving objects is on the rise across all sectors, including manufacturing, automotive, logistics, e-commerce, medical, and other industries. The high-quality capture and 3D reconstruction of dynamic scenes was a massive challenge until recently, but technological advancements and a thirst for innovation have given rise to new classes of robotic capabilities. Let’s see what comes next.

Editor’s Note: This article was republished (with alterations) with permission from Photoneo. The original can be found HERE.

About the Author

Andrea Pufflerova is a public relations specialist at Photoneo and a writer of technology articles on smart automation solutions powered by robotic vision and intelligence.
